Trigger Warning: This article contains mentions of sexual abuse and exploitation.

Imagine the first humans from the Garden of Eden: clothed only in fig leaves, in a quiet bid for privacy, suddenly thrust into the age of Artificial Intelligence (AI). With a few keystrokes, a machine could render them naked. And as history tells us, exposure and the male gaze would soon find their familiar address.

Eve stands little chance in a world that still treats her body as public terrain.

Technology is often framed as neutral, yet bodies reveal otherwise. A single command to X’s (formerly Twitter) Grok AI can generate sexualized imagery with ease. And it is women’s and children’s bodies that bear the brunt of this capability.

Much like Eve in the creation story, women are rendered more vulnerable, more visible and more violable. The issue is not only what AI can do, but whose bodies it is programmed, permitted and encouraged to undress.

In recent safeguarding efforts, Department of Information and Communications Technology (DICT) Secretary Henry Aguda sought to block access to Grok AI in the Philippines, as it poses risks of sexual exploitation of women and minors. This measure was lifted less than a week later, as xAI, the developer of Grok, promised “self-regulation.”

Under the mandate of the Cybercrime Prevention Act of 2012, steps to combat AI’s continued violation of women’s and children’s bodies are still being taken.

Yet child protection groups warn that such interventions barely slow down what has long been spreading. The Internet Watch Foundation has warned that generative AI tools are increasingly functioning as “child sexual abuse machines.” In 2025 alone, the organization recorded 312,030 reports of online sexual abuse material all over the globe, with 3,440 AI-generated videos involving children.

As we enter a digital age that blurs the lines between efficiency and exploitation, a burning question persists: do these measures ever address the rampant sexual abuse of women and children?

As the fig leaves fall

Eve’s body was not created to be a spectacle, nor was it meant for consumption.

She existed only as herself: bare but never public.

AI beckons a power that is accessible yet unaccountable. Whether used for efficiency or malice, its distant commands leave one deeply vulnerable. Eve’s body can no longer simply exist, nude or clothed, and these violations are not merely symbolic.

The sexual exploitation of women and children has long been a crisis in the digital world, and the Philippines is often dubbed a global “center” for online sexual abuse material.

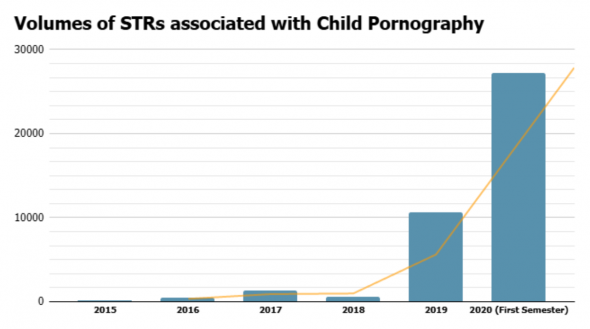

From 2015 to 2018, the Anti-Money Laundering Council (AMLC) recorded 2,611 suspicious transaction reports (STRs) related to child pornography. The number rose sharply in 2019, reaching 10,627 reports, and more than doubled to 27,217 in the first semester of 2020, a surge attributed to the pandemic-induced lockdown.

Researchers recorded that roughly 68% of women in the country had faced online harassment on social media in 2020.

Children remain consistent targets. The Commission on Human Rights (CHR) revealed that there were 1.297 million incidents of children being sexually exploited online by the end of 2020. These numbers continued to rise, doubling to 2.7 million incidents as 2023 concluded.

The CHR later called on government agencies to improve their reporting, rehabilitation and psychological interventions for long-term support and prevention of further abuse. The lack of enforced support not only increases the likelihood of these crimes but also deepens the untreated trauma of survivors.

Government agencies are mandated to maintain the strict implementation of the Anti-Child Pornography Act of 2009, with the assurance of sufficient care and recovery for the victims. Yet, the surge in statistics displays how existing policies to combat online sexual abuse struggle to keep up with the rapid advancements in technology.

Today, AI embeds itself in the internet’s norms, evolving from a mere tool for convenience to an increasingly weaponized one. It has not only perpetuated abuse, but it has also fostered an entirely new kind of digital criminal.

The CHR posited that despite steps being taken to improve the governance of AI use, these measures have been “fragmented and unevenly implemented.” Coupled with the intensifying risks of online sexual exploitation, the present stagnation of policies leaves an even more glaring gap in the capacity of these safeguards.

Erwin Alampay, a professor from the National College of Public Administration and Governance (NCPAG), acknowledged this widening gap. “Often, the laws lag behind. Technology comes first, before regulation.”

This lag leads to gray areas in AI regulations, which obscure how we identify what truly constitutes “adequate” policies.

Alampay said a crucial task of these regulations is “to determine the parameters of what is ‘acceptable use.’” The lack of clearly defined criteria in these mandates contributes to their uneven enforcement.

“It’s important, I think, to hear [that] there’s some [public] participation in the process of vetting [the frameworks] as to what is acceptable [use] and what is not,” Alampay underscored, so that the regulations reflect the realities lived by the people they seek to protect.

“It’s also important for people to understand the implications when you do something like this [AI generation],” he added.

This call for responsible AI use is not new. In 2024, amid the rapid rise of generative AI-driven sexual exploitation cases overseas, Council for the Welfare of Children executive director Angelo Tapales warned of the growing risk of the technology being used to sexually abuse children.

Tapales specifically cautioned parents against posting their children’s pictures online to avoid unwanted AI manipulation, and urged them to instill early knowledge of internet safety.

With its pervasive accessibility, AI has now become the main tool for both drawing in new victims and extending into the digital sphere the sexual abuse many women have already lived through.

Firewalls built after fire

By the time fig leaves are offered, the fruits have already been picked. The damage is done, all of which is irreversible.

As AI-generated abuse continues to surge, platforms respond with measures that seem to fail faster than they arrive. In January, X announced that Grok AI would block the generation of images of real people in bikinis or underwear in jurisdictions where such content is illegal, only to reiterate that only paid users can edit images.

Framed as protection, these measures remain selective: fragmented by geography, legality and access, rather than grounded in the lived realities of those harmed.

What shifted is not only the volume of abuse but also the ease with which it is produced. AI tools are becoming increasingly accessible to perpetrators, while legal and regulatory frameworks struggle to keep pace.

In the Philippines, officials have cautioned against focusing on Grok alone as lewd deepfakes circulate across platforms. Restricting one tool does not dismantle the system of abuse; it merely redirects it elsewhere.

Even more troubling is how these technical half-measures are shielded by ideology. As the owner of X and developer of Grok AI, Elon Musk directly shapes the platform’s approach to content moderation and safety.

By framing Grok as a defender of “free speech” and portraying restrictions as threats rather than responsibilities, Musk influences the very rules, or lack thereof, that govern the circulation of AI-generated sexual content. Labeling AI-generated sexual abuse as “speech” erases outright the violence embedded in its creation and circulation.

Under this logic, the right to generate content outweighs the right not to be violated, collapsing consent into code. Women’s and children’s bodies become collateral damage, visible and violable in a digital playground designed to prioritize ideology over safety.

In the age of AI, it has become more challenging to trace the perpetrators. Was it the user who typed the prompt? The platform that hosted the tool? The engineers who trained the model on scraped images?

Whose hand is stained with blood and should be placed on the scales of justice?

For victims, however, there is little ambiguity. They know what has been taken, what has circulated and what cannot be fully erased once automation becomes moral camouflage and violence persists without fingerprints. What else could the promise of firewalls offer in the face of ashes and victims whose dignity has been stripped, pixelated and replicated?

By the time safeguards are introduced, the images have already spread. By the time policies are debated, the trauma has already settled.

What remains are the consequences of delays, loopholes and diluted responsibility. The figs, once ripe with possibility, are left bruised on the branch and burdened by others’ inaction.

From fig leaves to firewalls, the tools have changed but the gaze has not.

Millennia have passed since the Garden of Eden, yet the burden of exposure remains unevenly borne. Fig leaves have evolved into firewalls: thin, brittle, drafted in neutrality. These walls rise slowly, patched after breach and weakened by compromise. Even so, the gaze wastes no time; it just expands wherever oversight falters.

With the story of visibility being rewritten in pixels, no covering could outlast a single prompt. The gaze returns to its oldest habit of claiming what it sees and measuring what it does not own. Eve does not need another metaphor. She needs restraint placed on the systems that render her visible.

Until then, the question remains: whether Eve will continue to be looked at, or finally, looked after.

Help & Reporting Hotlines in the Philippines:

UP Diliman Office of Anti-Sexual Harassment (OASH)

6/F Student Union Building, UP Diliman, Quezon City 1101

(02) 8981-8500 local 2645, 2466 or 2473

National Bureau of Investigation (NBI)

Anti-Violence Against Women and Children Division

Taft Avenue, Manila

Hotline: (02) 8525-6028

Council for the Welfare of Children (CWC)

Makabata Helpline: 0915-8022-375 / 0960-3779-863

UNICEF Philippines

Makabata Helpline: 1383

Or message Makabata Helpline on Facebook

Philippine Commission on Women Hotlines

Resources per Region